In the world of social impact, corporate governance, and global development, billions of dollars are spent on Monitoring and Evaluation (M&E). Yet, traditional evaluation methods often fall into a dangerous trap: they measure the what, but entirely fail to explain the how.

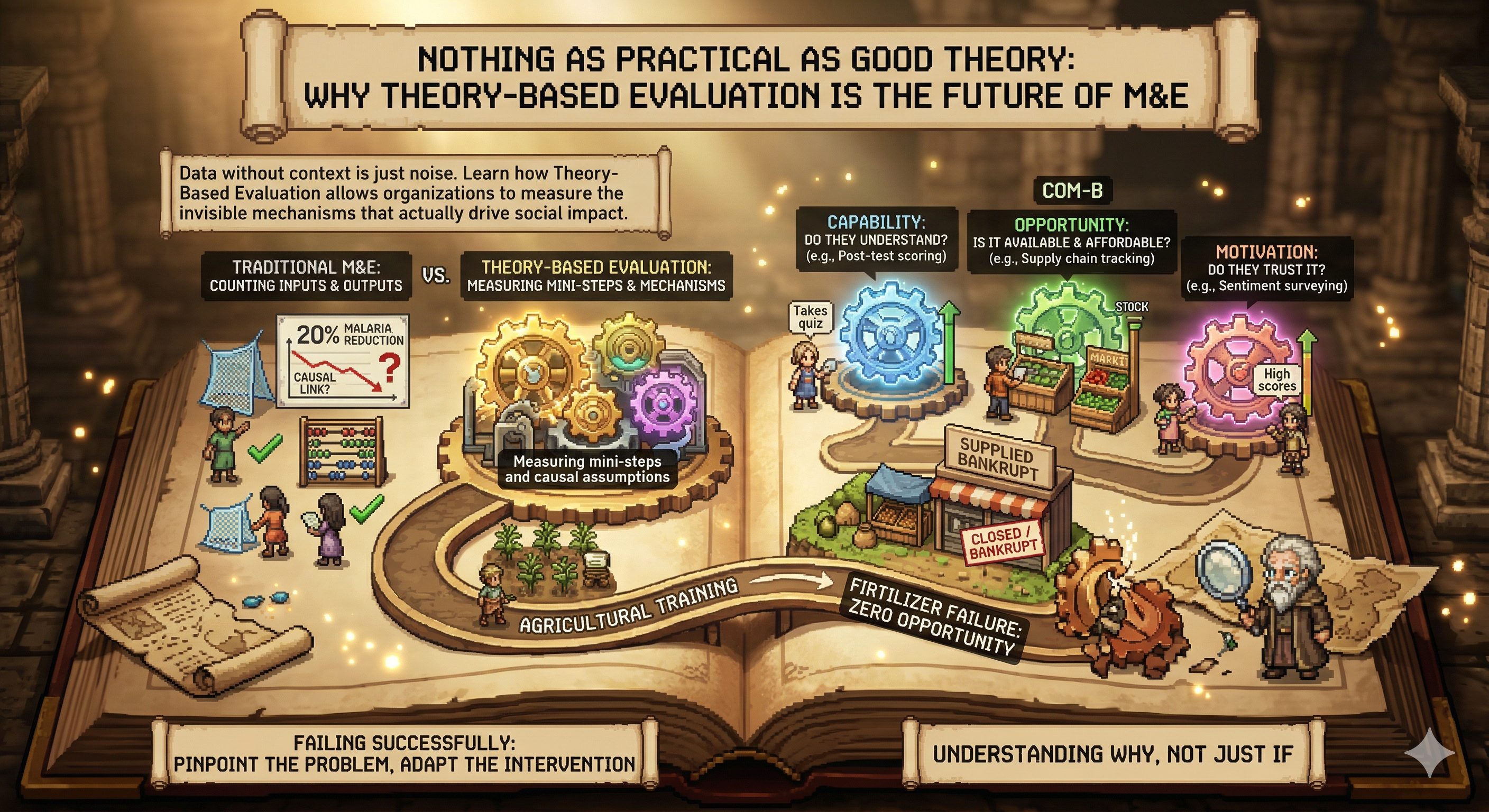

If a program distributes 10,000 mosquito nets (Output) and malaria rates drop by 20% (Impact), a standard evaluation claims success. But did the nets cause the drop? Did people actually sleep under them? Was the drop simply due to an unusually dry season with fewer mosquitoes? Standard input-output evaluation cannot answer these questions.

This is the exact problem that Carol Weiss addressed in her seminal 1995 paper, "Nothing as Practical as Good Theory: Exploring Theory-Based Evaluation for Comprehensive Community Initiatives for Children and Families".

The Black Box of Evaluation

Weiss argued that most social interventions operate as a "black box." Money goes in, and (hopefully) good results come out. But the actual mechanisms of change—the human behaviors, the shifting social norms, the psychological adaptations—remain unmeasured and invisible.

Theory-Based Evaluation (TBE) changes this paradigm. Instead of measuring only the final outcome, TBE tests the underlying Theory of Change itself. It demands that we articulate the specific mini-steps and causal assumptions connecting an Activity to an Impact, and then measure whether each of those assumptions actually holds.

Integrating COM-B as the Measurement Framework

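To conduct a true Theory-Based Evaluation, you need a way to measure the "mini-steps" of human behavior. This is where the COM-B model becomes a powerful tool for evaluators. COM-B holds that a Behavior (B) occurs only when a person has the Capability (C), the Opportunity (O), and the Motivation (M) to perform it.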

If your Theory of Change assumes that "Providing agricultural training (Activity) will lead to farmers adopting new fertilizer (Outcome)," a traditional M&E framework would simply count the number of attendees at the training, and then check fertilizer sales a year later.

A Theory-Based Evaluation using COM-B would measure the intermediate states between the training and the adoption. The M&E team would track:

- Capability metrics: Did the farmers actually understand the training? (Post-test scoring).

- Opportunity metrics: Is the new fertilizer physically available in the local market at a price they can afford? (Supply chain tracking).

- Motivation metrics: Do the farmers trust the new method more than their generational habits? (Sentiment surveying).
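One way to make these three checks concrete is to record each one as a scored indicator with a pass threshold. The sketch below is only an illustration: the `Indicator` class, the 0.0 to 1.0 scale, the thresholds, and every score are hypothetical and would need to be replaced by a program's own instruments and data.

```python
from dataclasses import dataclass


@dataclass
class Indicator:
    """One measurable assumption in the causal chain (hypothetical structure)."""
    component: str    # "capability", "opportunity", or "motivation"
    question: str     # the assumption being tested
    method: str       # how the data is collected
    score: float      # normalized to 0.0-1.0 (illustrative value)
    threshold: float  # assumed minimum for the assumption to count as holding

    def holds(self) -> bool:
        return self.score >= self.threshold


# Illustrative numbers only; they are not taken from any real program.
indicators = [
    Indicator("capability", "Did the farmers understand the training?",
              "post-test scoring", score=0.82, threshold=0.60),
    Indicator("opportunity", "Is the fertilizer available at an affordable price?",
              "supply chain tracking", score=0.35, threshold=0.50),
    Indicator("motivation", "Do farmers trust the new method over existing habits?",
              "sentiment surveying", score=0.71, threshold=0.50),
]


def summarize(items: list[Indicator]) -> dict[str, bool]:
    """Map each COM-B component to whether its assumption holds."""
    return {item.component: item.holds() for item in items}


for component, ok in summarize(indicators).items():
    print(f"{component}: {'holds' if ok else 'does not hold'}")
```

Keeping the question and the collection method next to each score makes it harder to report a number without saying which assumption it is supposed to test.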

Failing Successfully

By measuring the COM-B variables as part of a Theory-Based Evaluation, an organization gains the ability to fail successfully.

If the fertilizer is not adopted, the organization does not have to scrap the entire program. The TBE data might reveal that Capability and Motivation were high (the training worked, the farmers wanted to do it), but Opportunity was zero because the local supplier went bankrupt.

In that case, the evaluation has pinpointed the exact point of failure in the causal chain. The intervention can be swiftly adapted, perhaps by shifting budget from "more training" to "supply chain subsidization."
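The diagnostic step in this scenario can be sketched in a few lines. This is a minimal illustration, assuming each COM-B component has already been reduced to a single score between 0.0 and 1.0. The `diagnose` function, the 0.5 threshold, and the `RESPONSES` table are hypothetical names and values introduced here, not part of Weiss's paper or the COM-B model.

```python
# Hypothetical follow-up actions per COM-B component; only the opportunity
# response (supply chain subsidization) comes from the example above.
RESPONSES = {
    "capability": "revise or repeat the training",
    "opportunity": "shift budget toward supply chain subsidization",
    "motivation": "invest in trust-building with farmers",
}


def diagnose(scores: dict[str, float], threshold: float = 0.5) -> list[str]:
    """Return the components scoring below the threshold, weakest first."""
    failing = [name for name, value in scores.items() if value < threshold]
    return sorted(failing, key=lambda name: scores[name])


# Illustrative scores matching the scenario: training worked, farmers were
# willing, but the local supplier went bankrupt.
scores = {"capability": 0.85, "motivation": 0.80, "opportunity": 0.0}

for component in diagnose(scores):
    print(f"Weak link: {component} -> {RESPONSES[component]}")
```

An empty result from `diagnose` would mean all three measured assumptions held, which points the search for the failure elsewhere in the Theory of Change.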

As Weiss posited three decades ago, a well-articulated theory is the most practical tool an organization can have. By defining our logic with a Theory of Change (ToC) and measuring our assumptions with COM-B, we stop merely proving whether a program worked and finally understand why.